Asmbly Dash – part 3

Background

For this final iteration of my makerspace project, I wanted to lean into the remote assistance experience. It dawned on me that you don’t really want folks operating heavy machinery to be looking at their phones to assist others. On the other hand, allowing members to assist remotely from their homes or offices seemed like an idea worth pursuing.

User studies

The user studies were an invaluable tool in crafting a user experience that was unintrusive without leaving users in the dark as to what was happening.

I knew that a one-to-one assistance model was best. Allowing multiple chat threads or group threads would mean the user constantly re-explaining their issue, or the steps they’ve already taken, to each new helper. However, once assistance had been offered, I wasn’t sure what to do with all the request notifications still lingering on other users’ screens.

The first two options I experimented with were removing a request notification once it had been answered by someone else, or updating its text to say it had been answered. Both fell short from a user experience perspective (users felt they had missed the original message) and from an accessibility perspective (WCAG 2.0 requires a minimum amount of time before removing notifications).

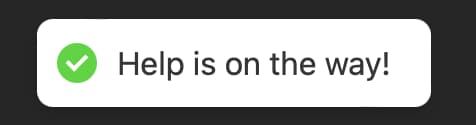

The final solution, proposed by a classmate, is one used by a tool called Be My Eyes. Be My Eyes lets users with sight impairment connect with volunteers on their phones, who describe what the phone’s camera is seeing. Its solution to this problem is to display a message indicating the request has been filled only if the volunteer engages with the request. That way, the volunteer is already engaged and won’t miss or be distracted by the new information.
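To make that behavior concrete, here is a minimal sketch of the logic in Python. It is not the actual Asmbly Dash or Be My Eyes code; the `HelpRequest` class, the `claim` and `open_notification` functions, and the message wording are all hypothetical names I made up for illustration. The point it shows is that the notification itself is never removed or rewritten, and the "already filled" message only appears when a helper opens it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HelpRequest:
    """A help request broadcast to several helpers (hypothetical model)."""
    request_id: str
    message: str
    claimed_by: Optional[str] = None  # id of the helper who accepted, if any


def claim(request: HelpRequest, helper_id: str) -> bool:
    """Try to accept a request. Only the first helper succeeds (one-to-one)."""
    if request.claimed_by is None:
        request.claimed_by = helper_id
        return True
    return False


def open_notification(request: HelpRequest, helper_id: str) -> str:
    """Return what a helper sees when they tap a lingering notification.

    The notification is left untouched on every helper's screen; the
    'filled' state is revealed only at the moment of engagement.
    """
    if request.claimed_by is None:
        return f"Open request: {request.message}"
    if request.claimed_by == helper_id:
        return f"You are helping with: {request.message}"
    return "This request has already been answered. Thanks for offering to help!"


# Usage: two helpers receive the same notification, one claims it first.
req = HelpRequest("r1", "The laser cutter will not home")
claim(req, "alice")
print(open_notification(req, "bob"))
```

Because nothing changes on screen until a helper taps the notification, this approach sidesteps both problems from the earlier experiments: no message disappears, and no text changes underneath someone who isn't looking at it.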

Next steps

Initially I thought video chat would be a luxury feature, but after user testing I’m convinced it would be really valuable. It’s difficult to describe complex machinery and parts through text alone, and a picture or video would help in many remote assistance situations.